Welcome back folks!

Like many of my fellow veteran men and women in tech, I’ve been putting in the effort to evolve my skill set and embrace the industry’s shift to a more code-focused world. Those of us who came from the “rack and stack” generation did some scripting here and there where it was workable, using VB, Bash, Batch, Perl, or the many other languages that have had their time in the limelight. The concept of a development lifecycle and code repository typically consisted of a few permanently open Notepad instances or, if you were really fancy, scripts saved to a file share with files labeled v1, v2, and so on. Times have changed, and we must change with them.

Over the past two years I’ve done significantly more coding. These efforts ranged from creating infrastructure using Microsoft ARM (Azure Resource Manager) and AWS CloudFormation templates to embracing serverless with Azure Functions and AWS Lambdas. Through this process I’ve quickly realized that the toolsets available to manage code and its lifecycle have evolved and gotten more accessible to us “non-developers”.

I’m confident there are others out there coming from a similar background, and I wanted to put together a post that might help them begin or move forward with their own journeys. So for this post I’m going to cover how to set up a Visual Studio Code environment on a Mac for developing code using Python.

With the introduction done, let’s get to it!

First up you’ll need to get Python installed. The Windows installation is pretty straightforward and can be downloaded here. Macs are a bit trickier because OS X ships with Python 2.7 by default. You can validate this by running python --version from the terminal. What this means is you’ll need to install Python 3.7 in parallel. Thankfully the process is documented heavily by others who are far more knowledgeable than me. William Vincent has some wonderful instructions.
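If you want to double check which interpreter a given command actually launches, a tiny script can report it. This is only a sketch: the function name is my own, and the 3.7 threshold simply mirrors the version used in this post.

```python
import sys


def is_python37_or_newer(version_info=sys.version_info):
    """Return True when the given version tuple is Python 3.7 or newer."""
    return tuple(version_info[:2]) >= (3, 7)


if __name__ == "__main__":
    # Show the full version string of the interpreter running this script.
    print(sys.version)
    if is_python37_or_newer():
        print("This interpreter is Python 3.7 or newer.")
    else:
        print("This interpreter is older than Python 3.7.")
```

Running it once with python and once with python3 should make it obvious which command points at which installation.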

Once Python 3.7 is installed, we’ll want to set up our IDE (integrated development environment). I’m partial to VSC (Visual Studio Code) because it’s free, cross-platform, and simple to use. Installation is straightforward so I won’t be covering those steps.

Well, you have your interpreter and your IDE, but you need a good solution to store and track changes to the code you’re going to put together. Gone are the days of managing it by saving copies (if you even got that far) to your desktop, and arrived are the days of Git. You can roll your own Git service or use a managed service. Since I’m a newbie, I’ve opted to go mainstream and simple with GitHub. A free account should more than suffice unless you’re planning on doing something that requires a ton of collaboration.

Now that your account is set up, let’s go through the process of creating a simple Python script, creating a new repository, committing the code, and pushing it up to GitHub. We’ll first want to create a new workspace in VSC. One of the benefits of a workspace is that you can configure settings on a per-project basis instead of modifying the settings of VSC as a whole.

To do this open VSC and create a new empty file using the New file shortcut as seen below.

Once the new window is opened, you can then choose Save Workspace as from the File context menu. Create a new directory for the project (I’ll refer to this as the project directory) and save the workspace to that folder. Create a subfolder under the workspace (I’ll refer to this as the working directory).

We’ll now want to initialize the local repository. We can do this by using the shortcut Command+Shift+P, which opens the Command Palette in VSC. Search for Git, choose Git: Initialize Repository, and select the working directory. You’ll be prompted to add the folder to the workspace, which you’ll want to do.

VSC will begin tracking changes to files you put in the folder and the Source Control icon will now be active.

Let’s now save the new file we created as hello-world.py. The py extension tells VSC that this is Python code, and you’ll gain a number of benefits such as IntelliSense. If you navigate back to the Source Control view you’ll see there are uncommitted changes from the new hello-world.py file. Let’s add the classic line of code to print Hello World. To execute the code we’ll choose the Start Without Debugging option from the Debug context menu.
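For reference, a minimal hello-world.py might look like the sketch below. Wrapping the message in a function is my own choice for illustration; a single print statement works just as well.

```python
# hello-world.py


def greeting():
    """Return the classic greeting so it can be printed or reused."""
    return "Hello World"


if __name__ == "__main__":
    # Runs only when the file is executed directly, for example via
    # Start Without Debugging in VSC.
    print(greeting())
```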

The built-in Python libraries will serve you well, but there are a TON of great libraries out there you’ll most certainly want to use. Wouldn’t it be wonderful if you could have separate instances of the interpreter with specific libraries? In comes the awesomeness of virtual environments. Using them isn’t required, but it is best practice in the Python world and will make your life a lot easier.

Creating a new virtual environment is easy.

- Open a new terminal in Visual Studio Code, navigate to your working directory, and create a new folder named envs.

- Create the new virtual environment using the command below.

python3 -m venv ./envs

You’ll now be able to select the virtual environment for use in the bottom left-hand corner of VSC as seen below.

After you select it, close out the terminal window and open a new one in VSC by selecting New Terminal from the Terminal context menu. You’ll notice the source command is run to select the virtual environment. You can now add new libraries using pip (Python’s package manager) as needed and they will be added to the virtual environment you created.
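If you’re ever unsure whether the terminal really picked up the virtual environment, you can ask the interpreter itself. The sketch below relies on the fact that, inside an environment created with venv, sys.prefix points at the environment while sys.base_prefix points at the base installation. The function name is hypothetical.

```python
import sys


def in_virtual_environment():
    """Return True when the running interpreter belongs to a venv."""
    # Outside a virtual environment both prefixes are identical.
    return sys.prefix != sys.base_prefix


if __name__ == "__main__":
    print("Interpreter prefix:", sys.prefix)
    print("Inside a virtual environment:", in_virtual_environment())
```

When the environment is active, the printed prefix should end with the envs folder created earlier.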

If you go back to the Source Control menu you’ll notice there are a whole bunch of new files. Essentially Git is trying to track all of the files within the virtual environment. You’ll want to have Git ignore them by creating a file named .gitignore. Within the file we’ll add two entries, one for the ignore file itself and one for the virtual environment directory (and a few others if you have some hidden files like Mac’s .DS_Store).
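You can type those entries by hand, but here is a small sketch that writes them for you. It assumes the virtual environment lives in a folder named envs inside the working directory, as in this post. The helper name and the default entry list are my own.

```python
from pathlib import Path

# Entries described above: the ignore file itself, the virtual
# environment folder (assumed to be named envs), and macOS metadata.
DEFAULT_ENTRIES = [".gitignore", "envs/", ".DS_Store"]


def ensure_gitignore(directory, entries=DEFAULT_ENTRIES):
    """Append any missing entries to directory/.gitignore.

    Returns the final list of lines so the result is easy to inspect.
    """
    path = Path(directory) / ".gitignore"
    existing = path.read_text().splitlines() if path.exists() else []
    missing = [entry for entry in entries if entry not in existing]
    lines = existing + missing
    path.write_text("\n".join(lines) + "\n")
    return lines


if __name__ == "__main__":
    # Run from the working directory that holds the repository.
    print(ensure_gitignore("."))
```

Running it a second time leaves the file unchanged, since only missing entries are appended.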

Let’s now commit the new file hello-world.py to the local repository. Accompanying the changes, you’ll also add a message about what has changed in the code. There is a whole art around good commit messages which you can research on the web. Most of my stuff is done solo, so it’s simple short messages to remind me of what I’ve done. You can make your Git workflows more sophisticated as outlined here, but for very basic development purposes a straight commit to the master works.

Now that we have the changes committed to our local repository, let’s push them up to a new remote repository in GitHub. First you’ll want to create an empty repository. To add data to the repo, you’ll need to authenticate. I’ve added two-factor authentication to my GitHub account, which it doesn’t look like Visual Studio Code supports at this time. To work around the limitation you can create personal access tokens. Not a great solution, but it will suffice as long as you practice good key management, create the tokens with a limited authorization scope, and limit their lifetime.

Once your repository is set and you’ve created your access token, you can push to the remote repository. In Visual Studio Code press Command+Shift+P to open the Command Palette and find the Git: Add Remote command to add the repository. Provide a name (I simply used origin, which seems to be the common name) and the URL of your repository. You’ll then be prompted to authenticate. Provide your GitHub username and use the personal access token as the password. Your changes will be pushed to the repository.
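If you’re curious what those menu clicks roughly translate to, the sketch below spells out the equivalent Git commands and can optionally run them. Treat it as an approximation, not a record of what VSC does internally. The function names and the repository URL are placeholders of my own, and it defaults to a dry run that only prints the commands.

```python
import shlex
import subprocess


def git_commands(remote_url, message, branch="master", remote="origin"):
    """Return the Git commands for committing and pushing hello-world.py."""
    return [
        ["git", "add", "hello-world.py"],
        ["git", "commit", "-m", message],
        ["git", "remote", "add", remote, remote_url],
        ["git", "push", "-u", remote, branch],
    ]


def run_all(commands, cwd=".", dry_run=True):
    """Print each command and, unless dry_run is set, execute it in cwd."""
    for command in commands:
        print(" ".join(shlex.quote(part) for part in command))
        if not dry_run:
            subprocess.run(command, cwd=cwd, check=True)


if __name__ == "__main__":
    # Placeholder URL: substitute your own repository before a real run.
    steps = git_commands("https://github.com/your-user/your-repo.git",
                         "Add hello world script")
    run_all(steps, dry_run=True)
```

Git will still prompt for your username and personal access token on the push when run for real, so the token never needs to live in the script.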

There you have it folks! I’m sure there are better ways to orchestrate this process, but this is what’s working for me. If you have alternative methods and shortcuts, I’d love to hear about them.

Have a great week!

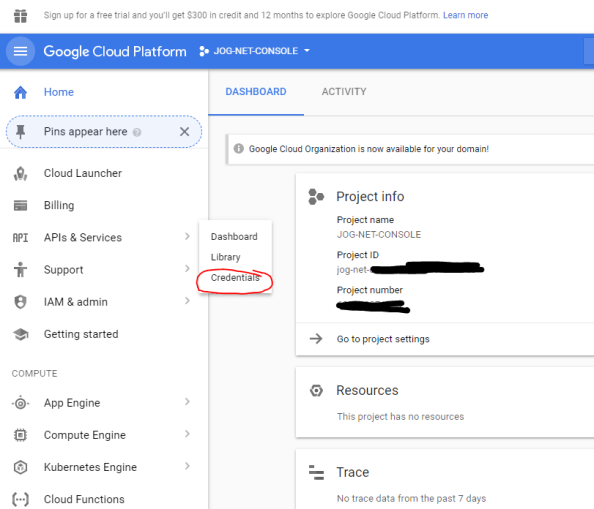

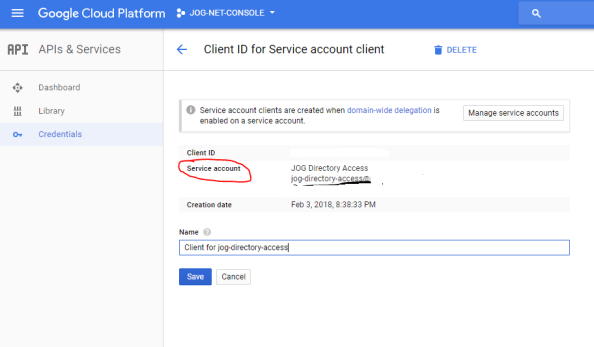

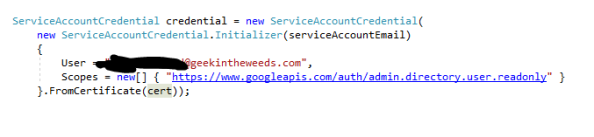

This identifier is one of the pieces of information I’ll need to include in the JSON Web Token (JWT) I’m going to send to Google’s API to obtain an access token. In addition to the service account identifier, I also need the scopes I want to access, Google Authorization Server endpoint, G-Suite user I’m impersonating, the expiration time I want for the access token (maximum of one hour), and the time the assertion was issued.
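To make that list of ingredients concrete, here is a rough Python sketch of the claim set. The claim names (iss, scope, aud, sub, iat, exp) follow Google’s service account flow as I understand it. The token endpoint URL and the function names are assumptions on my part, and the signing step (RS256 with the service account’s private key) is left out because it needs a third-party library.

```python
import base64
import json
import time

# Assumed Google Authorization Server token endpoint.
TOKEN_URL = "https://oauth2.googleapis.com/token"


def build_claims(service_account, scopes, user, now=None, lifetime=3600):
    """Build the JWT claim set for a service account impersonating a user."""
    issued_at = int(time.time()) if now is None else int(now)
    return {
        "iss": service_account,             # service account identifier
        "scope": " ".join(scopes),          # space-delimited scopes
        "aud": TOKEN_URL,                   # authorization server endpoint
        "sub": user,                        # user being impersonated
        "iat": issued_at,                   # time the assertion was issued
        "exp": issued_at + min(lifetime, 3600),  # capped at one hour
    }


def b64url(data):
    """Base64url-encode bytes without padding, as JWTs require."""
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def signing_input(claims):
    """Return the 'header.payload' string that would then be signed."""
    header = {"alg": "RS256", "typ": "JWT"}
    parts = [json.dumps(header, separators=(",", ":")),
             json.dumps(claims, separators=(",", ":"))]
    return ".".join(b64url(part.encode("utf-8")) for part in parts)
```

The finished JWT would be this string plus a dot and the base64url-encoded signature.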

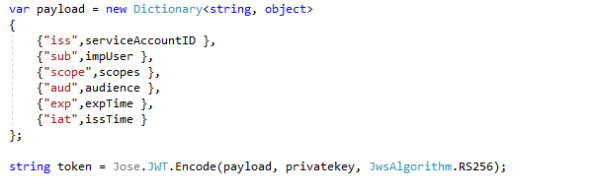

At this point I have all the components I need to assemble my JWT. For that I’m going to add the

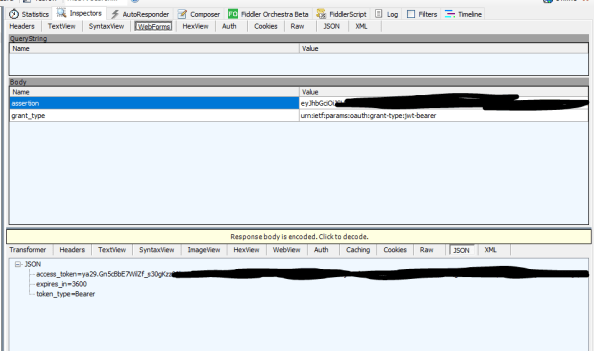

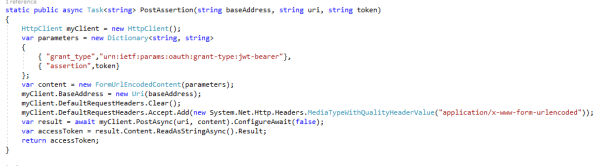

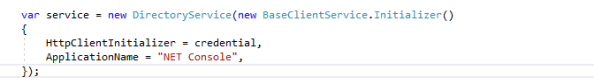

I now have my JWT assembled and am ready to deliver it to Google as an access token request. The next step is to submit it to Google’s authorization server. For that I need to do a few things. The first thing I need to do is create a new class that will act as the data model for the JSON response Google will return to me after I submit my authorization request. I’ll deserialize the response I receive back using this data model.
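As an illustration of what such a data model can look like, here is a Python sketch using a dataclass. The three fields (access_token, token_type, expires_in) are the ones I’d expect in Google’s token response. The class name and the sample values in the usage note are made up.

```python
import json
from dataclasses import dataclass


@dataclass
class TokenResponse:
    """Data model for the JSON body returned by the token endpoint."""
    access_token: str
    token_type: str
    expires_in: int

    @classmethod
    def from_json(cls, text):
        """Deserialize the JSON response text into a TokenResponse."""
        data = json.loads(text)
        return cls(
            access_token=data["access_token"],
            token_type=data["token_type"],
            expires_in=int(data["expires_in"]),
        )
```

Calling TokenResponse.from_json on a body such as {"access_token": "abc", "token_type": "Bearer", "expires_in": 3600} yields an object whose access_token attribute holds the bearer token.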

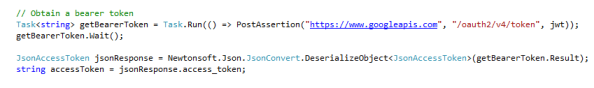

I then call the new task, pass the appropriate parameters, deserialize the JSON response using the data model I created earlier, and dump the bearer access token into a string variable.

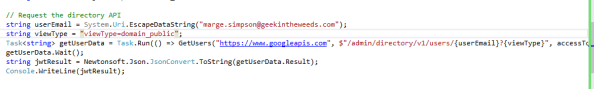

So I have my bearer access token that allows me to impersonate the user within my G-Suite domain for the purposes of hitting the Google Directory API. I whip up another task that will deliver the bearer access token to the Google Directory API and return the JSON response, which will include details about the user.
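For a sense of what that request looks like on the wire, here is a Python sketch that builds it with the standard library. The users endpoint URL is my assumption about the Directory API, the function name is hypothetical, and the request is only constructed here, not sent.

```python
import urllib.request
from urllib.parse import quote

# Assumed Directory API users endpoint.
DIRECTORY_USERS_URL = "https://admin.googleapis.com/admin/directory/v1/users/"


def build_user_request(access_token, user_key):
    """Build a GET request for one user's details using a bearer token."""
    url = DIRECTORY_USERS_URL + quote(user_key, safe="")
    headers = {
        "Authorization": "Bearer " + access_token,
        "Accept": "application/json",
    }
    return urllib.request.Request(url, headers=headers)
```

Passing the returned object to urllib.request.urlopen would send it, and the response body would be the JSON document describing the user.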

Finally I call the task and dump the JSON response to the console. The results look like the below.

Sessions 1 and 2 display the submission of the JWT and acquisition of the bearer token.
