This is part of my series on GenAI Services in Azure:

- Azure OpenAI Service – Infra and Security Stuff

- Azure OpenAI Service – Authentication

- Azure OpenAI Service – Authorization

- Azure OpenAI Service – Logging

- Azure OpenAI Service – Azure API Management and Entra ID

- Azure OpenAI Service – Granular Chargebacks

- Azure OpenAI Service – Load Balancing

- Azure OpenAI Service – Blocking API Key Access

- Azure OpenAI Service – Securing Azure OpenAI Studio

- Azure OpenAI Service – Challenge of Logging Streaming ChatCompletions

- Azure OpenAI Service – How To Get Insights By Collecting Logging Data

- Azure OpenAI Service – How To Handle Rate Limiting

- Azure OpenAI Service – Tracking Token Usage with APIM

- Azure AI Studio – Chat Playground and APIM

- Azure OpenAI Service – Streaming ChatCompletions and Token Consumption Tracking

- Azure OpenAI Service – Load Testing

Hello again folks!

Today, I’m going to be posting my first post in a series on Azure AI Studio. I’ll let the true AI professionals give you the gory details and features of the service. The way my small brain thinks of the service is a platform built on top of AML (Azure Machine Learning) to make building applications that use Generative AI more developer-friendly. You can build and test applications, deploy third-party models, and organize applications into “projects” which can be secured to a specific project team but share resources across an organization via the concept of a hub. I’ll cover more on those pieces in a future blog post, but for today I want to focus on a pattern I was messing around that I think would be appealing to most folks.

One of the neat features of AI Studio is the Chat Playground. The Chat Playground is a web interface for interacting with models you have deployed to Azure AI Studio. You can send prompts and receive completions, adjust parameters such as temperature, and even get a code sample of the code being run by the web interface. The models that can e deployed include OpenAI models deployed to an AOAI (Azure OpenAI Service) instance or third-party models like Meta’s Llama deployed to a serverless endpoint or self-managed compute (in AML called managed online endpoint). For the purposes of this post I’m going to be focusing on OpenAI models deployed to an AOAI instance.

You’re probably looking at this and thinking, “Yeah that is cool… a similar functionality exists in Azure OpenAI Studio and it does the same thing.” That’s correct it does, but for many organizations using the Azure OpenAI Studio’s Chat Playground isn’t an option for a number of different reasons both operational and security-related.

From an operational perspective, the Azure OpenAI Studio’s Chat Playground is designed to communicate directly with the endpoint for an AOAI instance. As I’ve covered in previous posts, this can be problematic. One reason is you’re limited to the quota within the instance which could cause you to hit limits quickly if you direct a whole ton of users to it. Typically, you will load balance across multiple instances deployed to multiple regions across multiple subscriptions as I discuss in my post on load balancing AOAI. The other problem is dealing with internal chargebacks. If I have multiple BUs (business units) hammering away at an instance, I don’t have any easy to determine who which folks in what BU consumed what. While metrics are token usage are captured in the metrics streamed from an instance, there is no way to associate that usage with an individual.

On the security side, communicating directly with the AOAI instance means I can’t review the prompts and responses being sent and received by the service. Many regulated organizations have requirements for these to be captured for review to ensure the service is being used appropriately and sensitive data isn’t being sent that hasn’t been approved to be sent. Additionally, availability of the AOAI instance could be affected by one user going nuts and consuming the full quota.

The challenges outlined above have driven many customers to insert a control point. The industry seems determined to coin this architectural component a Gen AI Gateway so I’ll play along. For you fellow old folks, all a Gen AI Gateway really is an API Gateway with some Gen AI-related features slapped on top of it. It sits between the front-facing user application and the models processing the prompts and responses. The GenAI-specific features available within the gateway help to address the operational and security challenges I’ve outlined above. If you’re curious about the specifics on this, you can check out my post on load balancing, logging, tracking token usage, rate limiting, and extracting useful information from the conversation such as prompts and responses.

In the image above I’ve included an example of how APIM (Azure API Management) could be used to provide such functionality. Within the customer base I work with at Microsoft, many customers have built something that functions similar to what you see above. A design like this helps to address the operational and security challenges I’ve outlined above.

Wonderful right? Now what the **** does this have to do with AI Studio’s Chat Playground? Well, unlike the Azure OpenAI Studio’s Chat Playground, AI Studio’s offering does support modifying the endpoint to point to your generative AI gateway. How you do this isn’t super intuitive, but it does work. Whether you go this route is totally up to you. Ok, disclaimer is done, let’s talk about how you do this.

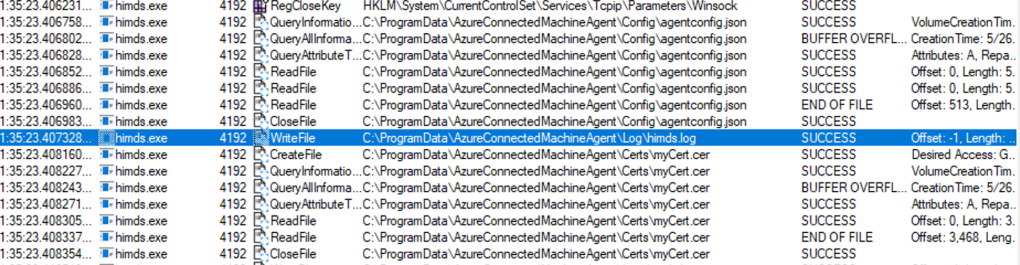

One thing to understand about using AI Studio’s Chat Playground is it works the same way that Azure OpenAI Studio’s version works in regards to where the TCP connections are sourced from when making calls to the model. As can be seen in the Fiddler capture below, the TCP connections made when you submit a prompt from the Chat Playground are sourced from the user’s endpoint.

This makes our life much easier because we likely control the path that user’s packet takes and the DNS the user uses which means we can direct that user’s packet to a Gen AI Gateway. For the purposes of this post, my goal is to funnel these prompts and completions through an APIM instance I have in place which has some APIM policy snippets that do some checks and balances and a call a small app (based off an awesome solution assembled by my buddy Shaun Callighan) which logs prompts and responses and calculates token metrics. The data processed by the app are then sent to an Event Hub, processed by Stream Analytics, and dumped into CosmosDB.

When you want to connect to an AOAI instance from AI Studio’s Chat Playground you add it as a connection. These connections can created at the hub level (think of this as a logical container for the projects) and then shared across projects. When adding the connection you can browse for the instance you want to connect to or enter manually.

If you were to do that you won’t be able to create a deployment of a model or access a deployment of a model deployed in the instances behind it. This is because AI Studio is making calls to the Azure management plane to enumerate the deployments within the instance. Since there isn’t an AOAI with the hostname of your AOAI instance, you’ll be unable to add deployments or pick a deployment from the Chat Playground.

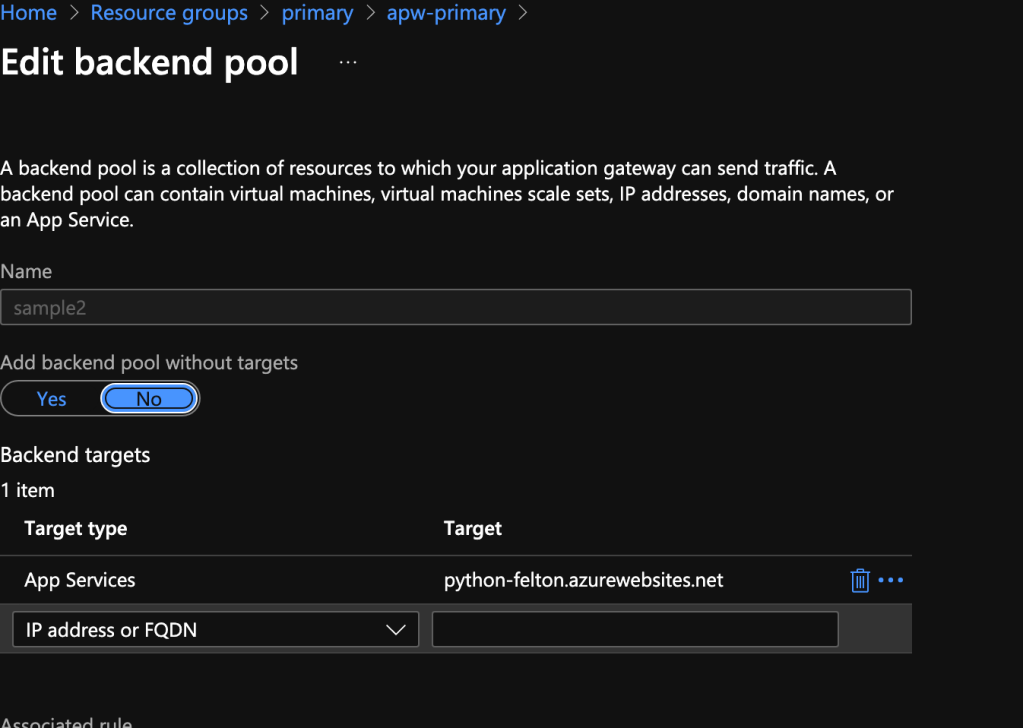

To work around this, you need to add a connection to one of your AOAI instances. This will be your “stub” instance that we’ll modify the endpoint of to point to API Management. If you’re load balancing across multiple AOAI instances behind APIM, you need to ensure that you’ve already created your model deployments and you’ve named them consistently across all of the AOAI instances you’re load balancing to. In the image below, I modify the endpoint to point to my APIM instance. The azure-openai-log-helper path is added to send it to a specific API I have setup on APIM that handles logging. For your environment, you’ll likely just need the hostname.

Now before you go running and trying to use the Chat Playground, you’ll have to make a change to the APIM policy. Since the user’s browser is being told to make the call to this endpoint from a different domain (AI Studio’s domain) we need to ensure there is a CORS policy in place on the APIM instance to allow for this, otherwise it will be blocked by APIM. If you forget about this policy you’ll get a back a 200 from the APIM instance but nothing will be in the response.

Your CORS policy could look like the below:

<cors>

<allowed-origins>

<origin>https://ai.azure.com/</origin>

<origin>https://ai.azure.com</origin>

</allowed-origins>

<allowed-methods preflight-result-max-age="300">

<method>POST</method>

<method>OPTIONS</method>

</allowed-methods>

<allowed-headers>

<header>authorization</header>

<header>content-type</header>

<header>request-id</header>

<header>traceparent</header>

<header>x-ms-client-request-id</header>

<header>x-ms-useragent</header>

</allowed-headers>

</cors>Once you’ve modified your APIM policy with the CORS update, you’ll be good to go! Your requests will now flow through APIM for all the GenAI Gateway goodness.

When messing with this I ran into a few things I want to call out:

- Do not forget the CORS policy. If you run into a 200 response from APIM with no content, it’s probably the CORS snippet.

- If you have a validate-jwt snippet in your APIM policy that includes validating the claim includes cognitivesservices, remove that. The claim passed by AI Studio includes a trailing forward slash which won’t likely match what you get back if you’re using the MSAL library in code. You could certainly include some logic to handle it, but honestly the security benefit is so little from checking the claim just make it easy on yourself and remove the check for the claim. Keep the check that validate-jwt snippet but restrict it to checking the tenant ID in the token.

- Chat Playground will pass the content property as the prompt as an array (this is the more modern approach to allow for multi-modal models like GPT-4o which can handle images and audio). If you have an APIM policy in place to parse the request body and extract information you’ll need to update it to also handle when content is passed as an array.

- Chat Playground allows for the user to submit an image along with text in the prompt. Ensure your APIM policy is capable of handling prompts like that. Dealing with human users being able to submit images to an LLM and ensuring you’re reviewing that image for DLP and calculating token consumption for streaming Chat Completion is a whole other blog topic that I’m not going to do today. Key thing is you want to account for that. Block images or ensure your policy is capable of handling it if you’re deploying 4o or 4 Vision.

Well folks that sums up this post. I realize this solution is a bit funky, and I’m not gonna tell you to use it. I’m simply putting it out there as an option if you have a business need strong enough to provide a ChatGPT-style solution but don’t have the bandwidth or time to whip up your own application.

Enjoy!