Hello again fellow geeks.

Last year Microsoft announced the general availability of Private Endpoint support for App Services. This was a feature I was particularly excited about because when combined with regional Vnet integration, it offered an alternative to an ASE (App Service Environment) for customers who wanted to run internally-facing applications or who didn’t feel comfortable exposing their publicly facing application directly via a public IP.

Over the past year I created a few different labs that demonstrated these features including a web app and function app. Each lab was built around a hub and spoke architecture where the application was serving as an internally-facing application. Given my recent post on Azure Firewall Premium, and the fact I still had the lab environment up and running, I thought it would be interesting to switch the virtual machine out and switch App Services in, which resulted in the lab environment pictured below.

I established the following goals for the lab environment:

- Perform IDPS on traffic to the web app

- Mediate and inspect traffic initiated from the web app to third-party APIs

- Expose a web application to the Internet without giving it a direct public IP address

To accomplish these goals I was able to re-use much of the pattern I described in my last post.

The first step in the process was to create a very simple hub and spoke architecture as pictured above. The environment would consist of a transit Vnet (virtual network), shared services Vnet, and spoke Vnet. The transit Vnet would contain the guts of the solution with an Azure Firewall Premium SKU instance and Azure Application Gateway v2 instance. In the shared services Vnet I used a Windows Server VM (virtual machine) running the DNS Server service and providing DNS resolution for the environment. I could have taken a shortcut here and used Azure Firewall’s DNS proxy capability, but I like to leave the options open for conditional forwarding which Azure Firewall and Azure Private DNS do not support at this time. Finally, in the spoke Vnet I created two subnets. One subnet hosted the private endpoint for App Services and the other subnet was delegated to App Services to support regional Vnet integration.

Once all components were in place, I deployed a simple Python web application I wrote. All it does is query two public APIs, one for the current time and the other for a random Breaking Bad quote (greatest show of all time!). I took the cheating way out and deployed the app directly via Visual Studio using the Azure App Service add-in prior to deploying the private endpoint and regional Vnet integration capabilities. This allowed me to test the app to validate it was still working as intended while also allowing me to deploy the app from my home machine. Deploying a private endpoint for App Services locks down not only access to the running application but also to the SCM (source control management) endpoint that is used for deploying code to the app.

The next step was to create the Azure Private DNS Zone for App Services, which is named privatelink.azurewebsites.net. I then linked this zone to my shared services Vnet and set up the DNS server running in that Vnet with a standard forwarder to 168.63.129.16. This setup allows DNS queries for records in the Private DNS Zone to be resolved by the DNS server. I’ve written extensively about how DNS works with Azure Private Link so I won’t go into any more detail on that flow. The last step in the DNS process is to configure each Vnet to use the DNS server IP in its DNS Server settings. This also needs to be configured directly on the Azure Firewall.

Now that the infrastructure would be able to resolve queries to the Private DNS Zone used by App Services, it was time to create the private endpoint for the web app. This registered the appropriate DNS records in the Private DNS Zone for my web app. With the private endpoint created, the last step was to enable Vnet integration.
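As a rough illustration of what gets registered, here is a minimal Python sketch of the A records I would expect to see in the privatelink.azurewebsites.net zone for a web app: one for the app itself and one for its SCM endpoint. The app name `myapp`, the IP `10.2.1.4`, and the helper `private_link_records` are all hypothetical and only there for illustration; check the zone in your own environment rather than treating this as authoritative.

```python
PUBLIC_SUFFIX = ".azurewebsites.net"
PRIVATE_ZONE = "privatelink.azurewebsites.net"


def private_link_records(app_hostname: str, private_ip: str) -> dict[str, str]:
    """Return the A records expected in the Private DNS Zone for a web app.

    Hypothetical helper: it only models the naming convention, it does not
    talk to Azure.
    """
    if not app_hostname.endswith(PUBLIC_SUFFIX):
        raise ValueError(f"{app_hostname} is not an {PUBLIC_SUFFIX} name")
    app_name = app_hostname[: -len(PUBLIC_SUFFIX)]
    return {
        # Record for the running application
        f"{app_name}.{PRIVATE_ZONE}": private_ip,
        # Record for the SCM (deployment) endpoint
        f"{app_name}.scm.{PRIVATE_ZONE}": private_ip,
    }


# Hypothetical app name and private endpoint IP
records = private_link_records("myapp.azurewebsites.net", "10.2.1.4")
for name, ip in records.items():
    print(f"{name} -> {ip}")
```

Both records point at the same private endpoint IP, which is why creating the private endpoint locks down the SCM endpoint along with the app.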

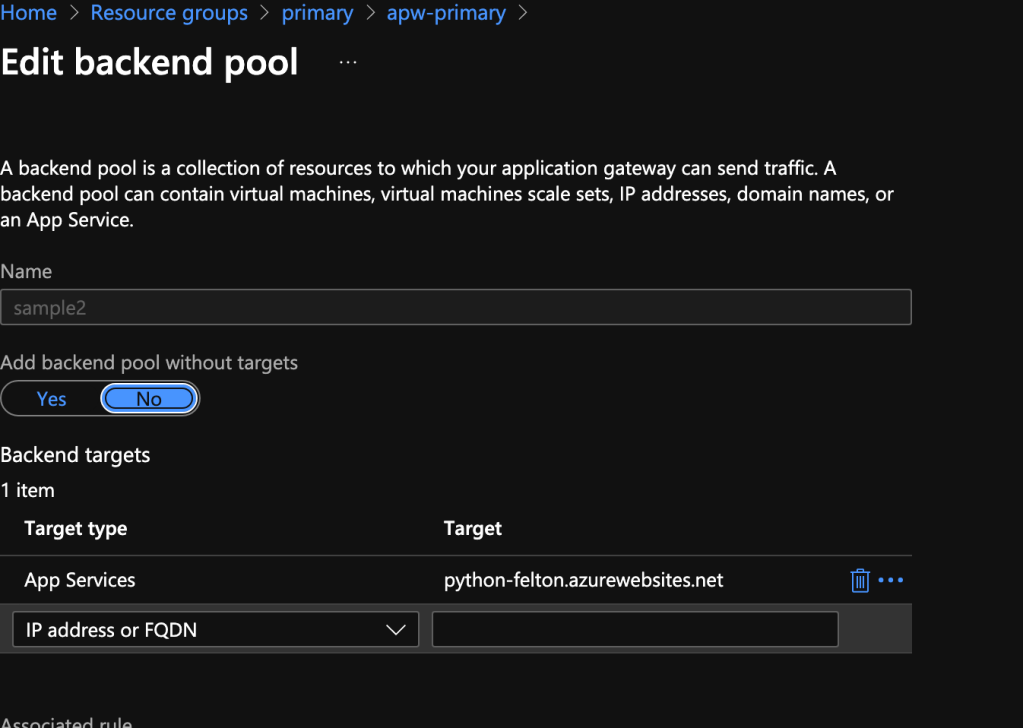

At this point I had all the guts of my solution and it was time to configure traffic to flow the way I wanted it to flow. Before I could begin work in Azure I needed to do the work outside of Azure. This involved creating an A record in my DNS hosting provider to point the public DNS name of my web app to the AGW’s (Application Gateway) public IP. This name can be whatever you want as long as you provide a certificate to the AGW such that it will identify itself as that name to the user. The AGW configuration is very straightforward and this tutorial will get you most of the way there. One thing to note is you’ll need to set the backend to point to the name given to the app service. This allows you to take advantage of the certificate Microsoft automatically provisions to the given app service.

Since I wanted to route traffic destined for the web app through the Azure Firewall Premium instance, I needed to ensure the AGW trusted the certificate served up by Azure Firewall. This is done by modifying the HTTP setting used in the AGW rule for the app. Here you can upload the root certificate that issued the Azure Firewall Premium intermediate certificate.

Now that the AGW was configured, I needed to create a route table for the subnet the AGW has its private IP address in. In this route table I disabled BGP (border gateway protocol) propagation so that no learned route could replace the default route, since AGW v2 requires a default route pointing directly to the Internet. Now this is where it gets interesting from a routing perspective. Whenever you create a private endpoint for a service, a /32 system route is added to the route table of all the subnets within the private endpoint’s Vnet as well as any Vnet the private endpoint’s Vnet is peered with. If I want traffic from the AGW to route through the Azure Firewall, I need to override that system route with a /32 UDR (user-defined route). As you can imagine, this can become extremely tedious at scale and even risks hitting the max routes per route table depending on the scale we’re talking about. On the positive side, the issues around this are something Microsoft is aware of so hopefully that means this will be addressed at some point. In the meantime you’ll need to use the /32 route in a pattern such as this.
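To show why a broader UDR is not enough, here is a small Python sketch of route selection as I understand it: the longest matching prefix wins, and when two routes have the same prefix, a user-defined route beats a BGP route, which beats a system route. All address ranges, the `Route` class, and the `select_route` function are made up for illustration; this is a toy model and not how Azure implements it.

```python
import ipaddress
from dataclasses import dataclass

# Tie-break order when prefixes are identical: user-defined, then BGP, then system
SOURCE_PRIORITY = {"user": 0, "bgp": 1, "system": 2}


@dataclass(frozen=True)
class Route:
    prefix: str
    next_hop: str
    source: str  # "user", "bgp", or "system"


def select_route(routes: list[Route], destination: str) -> Route:
    """Pick the route for a destination: longest prefix first, then source priority."""
    dest = ipaddress.ip_address(destination)
    candidates = [r for r in routes if dest in ipaddress.ip_network(r.prefix)]
    if not candidates:
        raise LookupError(f"no route to {destination}")
    return min(
        candidates,
        key=lambda r: (-ipaddress.ip_network(r.prefix).prefixlen, SOURCE_PRIORITY[r.source]),
    )


# Hypothetical effective routes on the AGW subnet; 10.2.1.4 is the private endpoint
routes = [
    Route("0.0.0.0/0", "Internet", "system"),
    Route("10.2.0.0/16", "VNetPeering", "system"),
    Route("10.2.1.4/32", "InterfaceEndpoint", "system"),
    # A UDR covering the whole spoke Vnet loses to the more specific /32 system route
    Route("10.2.0.0/16", "VirtualAppliance", "user"),
]
print(select_route(routes, "10.2.1.4").next_hop)  # InterfaceEndpoint

# Adding a /32 UDR ties on prefix length and wins on source priority
routes.append(Route("10.2.1.4/32", "VirtualAppliance", "user"))
print(select_route(routes, "10.2.1.4").next_hop)  # VirtualAppliance
```

In this model the only way to steer traffic for the private endpoint to the firewall is to match the /32 exactly, which is why every new private endpoint means another route in the AGW route table.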

Azure Firewall Premium needs to be configured to allow traffic from the AGW to the web app and inspect this traffic. You can check out my last post for instructions on configuring Azure Firewall to perform these activities.

Excellent, work is done right? Nope! If we influence routing on one side, we have to ensure the other side routes the same way. Toss a route table on the private endpoint subnet you say? Sorry, that isn’t supported my friend. Instead I needed to enable the Azure Firewall instance to SNAT (source NAT) traffic to the spoke Vnet. This ensures routing is symmetric and eliminates any potential connection issues caused by creating only the UDR on the AGW subnet.
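The reasoning behind the SNAT can be sketched in a few lines of Python. This is a toy model under my own assumptions: the IPs and the `trace` and `is_symmetric` helpers are hypothetical, and it simply assumes the private endpoint replies straight to whatever source IP it saw because its subnet cannot be given a route table.

```python
# Hypothetical private IPs for the lab components
AGW_IP = "10.0.1.4"
FIREWALL_IP = "10.0.0.4"
OWNER_OF = {AGW_IP: "agw", FIREWALL_IP: "firewall"}


def trace(snat: bool) -> tuple[list[str], list[str]]:
    """Return the (forward, reply) hop lists for a request from the AGW to the app."""
    # The /32 UDR on the AGW subnet always sends the request via the firewall
    forward = ["agw", "firewall", "endpoint"]
    # With SNAT the endpoint sees the firewall's IP, otherwise it sees the AGW's IP
    source_seen = FIREWALL_IP if snat else AGW_IP
    # No UDR can be applied on the endpoint side, so the reply goes directly
    # to the owner of the source IP
    first_hop = OWNER_OF[source_seen]
    reply = ["endpoint", first_hop]
    if first_hop == "firewall":
        # The firewall reverses the NAT and hands the reply back to the AGW
        reply.append("agw")
    return forward, reply


def is_symmetric(snat: bool) -> bool:
    """True when the reply retraces the forward path in reverse."""
    forward, reply = trace(snat)
    return reply == forward[::-1]


print(is_symmetric(snat=False))  # False: the reply bypasses the firewall
print(is_symmetric(snat=True))   # True: the reply returns through the firewall
```

Without SNAT the firewall only ever sees half of the conversation, which is where the connection issues come from.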

Lastly, I needed to create and apply a route table to the subnet I delegated to App Services for regional Vnet integration. This route table can be very simple and be configured with a single UDR for the default route pointing to the Azure Firewall private IP. This results in the traffic flow below:

This one was another fun pattern to solve. I got a chance to mess around more with AGW and also get a pattern I had theorized would work actually working. I find implementing the pretty pictures I draw helps drive home the benefits and considerations of such a pattern. This pattern specifically suffers from considerations similar to those of the pattern in my last post. These include:

- Challenges with observability

- Operational overhead of certificate management

- Possible latency issues depending on latency requirements and traffic patterns

This pattern tacks on more operational overhead by requiring that /32 route be added to the AGW route table each time a new app service is provisioned with a private endpoint. Observability further suffers when tracing a packet flow end-to-end due to the additional layer of SNAT required via Azure Firewall. One thing to note about this is you may be able to get around the SNAT requirement for web-specific traffic because of the transparent proxy functionality behind Azure Firewall application rules. I want to highlight I have not tested this myself and I typically tend to SNAT for this use case in my lab because many of my customers may use similar patterns but with a 3rd party firewall.

So there you go folks, another pattern to add to your inventory. Hopefully we’ll see the /32 route issue with private endpoints resolved sometime in the near future. Have a great weekend!