Updates:

7/2025 – Updated to remove mentions of DNS query logging limitations with Private DNS Resolver due to introduction of DNS Security Policies

This is part of my series on DNS in Microsoft Azure.

- DNS in Microsoft Azure – Azure-provided DNS

- DNS in Microsoft Azure – Azure Private DNS

- DNS in Microsoft Azure – Azure Private DNS Resolver

- DNS in Microsoft Azure – PrivateLink Private Endpoints

- DNS in Microsoft Azure – PrivateLink Private Endpoints and Private DNS

- DNS in Microsoft Azure – Private DNS Fallback

- DNS in Microsoft Azure – DNS Security Policies

Hello again!

In this post I’ll be continuing my series on Azure Private Link and DNS with my 5th entry into the DNS series. In my last post I gave some background into Private Link, how it came to be, and what it offers. For this post I’ll be covering how the DNS integration works for Azure native PaaS services behind Private Link Private Endpoints.

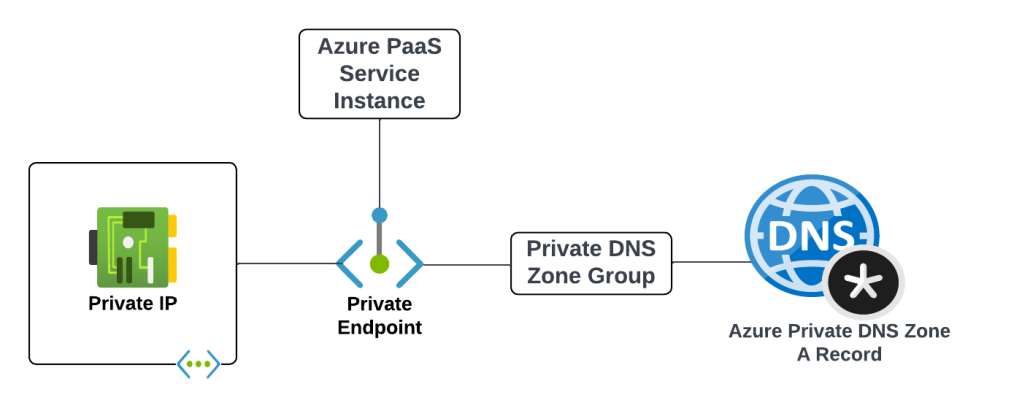

Before we get into the details of how it all works let’s first look at the components that make up an Azure Private Endpoint created for an Azure native service that is integrated with Azure Private DNS. These components include (going from left to right):

- Virtual Network Interface – The virtual network interface (VNI) is deployed into the customer’s virtual network and reserves a private IP address that is used as the path to the Private Endpoint.

- Private Endpoint – The Azure resource that represents the private connectivity to the service instance and establishes the relationships to the other resources.

- Azure PaaS Service Instance – This could be a customer’s instance of an Azure SQL Server, blob endpoint for a storage account, and any other Microsoft PaaS that supports private endpoints. The key thing to understand is the Private Endpoint facilitates connectivity to a single instance of the service.

- Private DNS Zone Group – The Private DNS Zone Group resource establishes a relationship between the Private Endpoint and an Azure Private DNS Zone automating the lifecycle of the A record(s) registered within the zone. You may not be familiar with this resource if you’ve only used the Azure Portal.

- Azure Private DNS Zone – Each Azure PaaS service has a specific namespace or namespaces it uses for Private Endpoints.

An example of the components involved with a Private Endpoint for the blob endpoint of an Azure Storage Account would be similar to what is pictured below.

I’ll now walk through some scenarios to understand how these components work together.

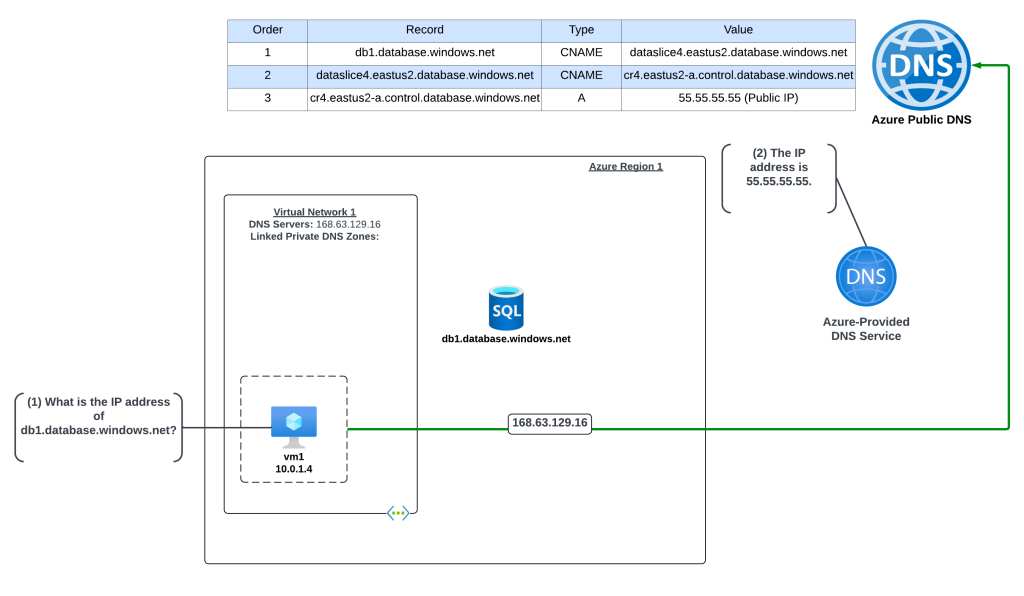

Scenario 1 – Default DNS Pattern Without Private Link Endpoint with a single virtual network

In this example an Azure virtual machine needs to resolve the name of an Azure SQL instance named db1.database.windows.net. No Private Endpoint has been configured for the Azure SQL instance and the VNet is configured to use the 168.63.129.16 virtual IP and Azure-provided DNS.

The query resolution is as follows:

- VM1 creates a DNS query for db1.database.windows.net. VM1 does not have a cached entry for it so the query is passed on to the DNS server configured for the operating system. The virtual network DNS server setting has been left at the default of the 168.63.129.16 virtual IP, which the Azure DHCP Service pushes to the VNI. The recursive query is sent to the virtual IP and passed on to the Azure-provided DNS service.

- The Azure-provided DNS service checks to see if there is an Azure Private DNS Zone named database.windows.net linked to the virtual network. Once it validates there is not, the recursive query is resolved against the public DNS namespace and the public IP 55.55.55.55 of the Azure SQL instance is returned.

Scenario 2 – DNS Pattern with Private Link Endpoint with a single virtual network

In this example an Azure virtual machine needs to resolve the name of an Azure SQL instance named db1.database.windows.net. A Private Endpoint has been configured for the Azure SQL instance and the VNet is configured to use the 168.63.129.16 virtual IP which will use Azure-provided DNS. An Azure Private DNS Zone named privatelink.database.windows.net has been created and linked to the machine’s virtual network. Notice that a new CNAME has been created in public DNS named db1.privatelink.database.windows.net.

The query resolution is as follows:

- VM1 creates a DNS query for db1.database.windows.net. VM1 does not have a cached entry for it so the query is passed on to the DNS server configured for the operating system. The virtual network DNS server setting has been left at the default of the 168.63.129.16 virtual IP, which the Azure DHCP Service pushes to the VNI. The recursive query is sent to the virtual IP and passed on to the Azure-provided DNS service.

- The Azure-provided DNS service checks to see if there is an Azure Private DNS Zone named database.windows.net linked to the virtual network. Once it validates there is not, the recursive query is resolved against the public DNS namespace. During resolution the CNAME db1.privatelink.database.windows.net is returned. The Azure-provided DNS service checks to see if there is an Azure Private DNS Zone named privatelink.database.windows.net linked to the virtual network and determines there is. The query is resolved to the private IP address of 10.0.2.4 of the Private Endpoint.
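To make the difference between Scenario 1 and Scenario 2 concrete, here is a minimal Python sketch of the decision the Azure-provided DNS service makes as described above. It is a toy model, not how the service is implemented: the record tables are hard-coded from the scenario, the public CNAME chain is shortened to a single hop, and the function names are my own.

```python
from typing import Dict, Optional, Set

# Simplified public DNS data based on the scenario (real chains have more hops).
PUBLIC_CNAMES: Dict[str, str] = {
    "db1.database.windows.net": "db1.privatelink.database.windows.net",
}
PUBLIC_A: Dict[str, str] = {
    "db1.privatelink.database.windows.net": "55.55.55.55",
}

# A records held in Azure Private DNS Zones, keyed by zone name.
PRIVATE_ZONES: Dict[str, Dict[str, str]] = {
    "privatelink.database.windows.net": {
        "db1.privatelink.database.windows.net": "10.0.2.4",
    },
}


def find_linked_zone(name: str, linked_zones: Set[str]) -> Optional[str]:
    """Return the most specific linked private zone that covers name, if any."""
    matches = [z for z in linked_zones if name == z or name.endswith("." + z)]
    return max(matches, key=len) if matches else None


def resolve(name: str, linked_zones: Set[str]) -> Optional[str]:
    """Resolve name as seen from a virtual network with the given zone links."""
    for _ in range(8):  # guard against CNAME loops
        zone = find_linked_zone(name, linked_zones)
        if zone is not None:
            # A linked private zone answers for this name.
            return PRIVATE_ZONES.get(zone, {}).get(name)
        if name in PUBLIC_CNAMES:
            # Follow the public CNAME and re-check the zone links.
            name = PUBLIC_CNAMES[name]
            continue
        return PUBLIC_A.get(name)
    return None


# Virtual network linked to the privatelink zone: private IP.
print(resolve("db1.database.windows.net", {"privatelink.database.windows.net"}))
# Virtual network with no zone link: public IP.
print(resolve("db1.database.windows.net", set()))
```

The first call prints 10.0.2.4 and the second prints 55.55.55.55. The only input that changes is the set of zones linked to the virtual network where the query lands.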

Scenario 2 Key Takeaway

The key takeaway from this scenario is the Azure-provided DNS service is able to resolve the query to the private IP address because the virtual network zone link is established between the virtual network and the Azure Private DNS Zone. The virtual network link MUST be created between the Azure Private DNS Zone and the virtual network where the query is passed to the 168.63.129.16 virtual IP. If that link does not exist, or the query hits the Azure-provided DNS service through another virtual network, the query will resolve to the public IP of the Azure PaaS instance.

Great, you understand the basics. Let’s apply that knowledge to enterprise scenarios.

Scenario 3 – Azure-to-Azure resolution of Azure Private Endpoints

First up I will cover resolution of Private Endpoints within Azure when it is one Azure service talking to another in a typical enterprise Azure environment with a centralized DNS service.

Scenario 3a – Azure-to-Azure resolution of Azure Private Endpoints with a customer-managed DNS service

First I will cover how to handle this resolution using a customer-managed DNS service running in Azure. Customers may choose to do this over the Private DNS Resolver pattern because they have an existing 3rd-party DNS service (InfoBlox, BlueCat, etc.) they already have experience with.

In this scenario the Azure environment has a traditional hub and spoke where there is a transit network such as a VWAN Hub or a traditional virtual network with some type of network virtual appliance handling transitive routing. The customer-managed DNS service is deployed to a virtual network peered with the transit network. The customer-managed DNS service virtual network has a virtual network link to the Private DNS Zone for the privatelink.database.windows.net namespace. An Azure SQL instance named db1.database.windows.net has been deployed with a Private Endpoint in a spoke virtual network. An Azure VM has been deployed to another spoke virtual network and the DNS server settings of that virtual network have been configured with the IP address of the customer-managed DNS service.

Here, the VM running in the spoke is resolving the IP address of the Azure SQL instance private endpoint.

The query resolution path is as follows:

- VM1 creates a DNS query for db1.database.windows.net. VM1 does not have a cached entry for it so the query is passed on to the DNS server configured for the operating system. The virtual network DNS server setting has been set to 10.1.0.4, which is the IP address of the customer-managed DNS service, and is pushed to the virtual network interface by the Azure DHCP Service. The recursive query is passed to the customer-managed DNS service over the virtual network peerings.

- The customer-managed DNS service receives the query, validates it does not have a cached entry and that it is not authoritative for the database.windows.net namespace. It then forwards the query to its standard forwarder, which has been configured to be the 168.63.129.16 virtual IP address for the virtual network, in order to pass the query to the Azure-provided DNS service.

- The Azure-provided DNS service checks to see if there is an Azure Private DNS Zone named database.windows.net linked to the virtual network. Once it validates there is not, the recursive query is resolved against the public DNS namespace. During resolution the CNAME db1.privatelink.database.windows.net is returned. The Azure-provided DNS service checks to see if there is an Azure Private DNS Zone named privatelink.database.windows.net linked to the virtual network and determines there is. The query is resolved to the private IP address of 10.0.2.4 of the Private Endpoint.

Scenario 3b – Azure-to-Azure resolution of Azure Private Endpoints with the Azure Private DNS Resolver

In this scenario the Azure environment has a traditional hub and spoke where there is a transit network such as a VWAN Hub or a traditional virtual network with some type of network virtual appliance handling transitive routing. An Azure Private DNS Resolver with an inbound and an outbound endpoint has been deployed into a shared services virtual network that is peered with the transit network. The shared services virtual network has a virtual network link to the Private DNS Zone for the privatelink.database.windows.net namespace. An Azure SQL instance named db1.database.windows.net has been deployed with a Private Endpoint in a spoke virtual network. An Azure VM has been deployed to another spoke virtual network and the DNS server settings of that virtual network have been configured with the IP address of the Azure Private DNS Resolver inbound endpoint.

Here, the VM running in the spoke is resolving the IP address of the Azure SQL instance private endpoint.

- VM1 creates a DNS query for db1.database.windows.net. VM1 does not have a cached entry for it so the query is passed on to the DNS server configured for the operating system. The virtual network DNS server setting has been set to 10.1.0.4, which is the IP address of the Azure Private DNS Resolver inbound endpoint, and is pushed to the virtual network interface by the Azure DHCP Service. The recursive query is passed to the Azure Private DNS Resolver inbound endpoint via the virtual network peerings.

- The inbound endpoint receives the query and passes it into the virtual network through the outbound endpoint which passes it on to the Azure-provided DNS service through the 168.63.129.16 virtual IP.

- The Azure-provided DNS service checks to see if there is an Azure Private DNS Zone named database.windows.net linked to the virtual network. Once it validates there is not, the recursive query is resolved against the public DNS namespace. During resolution the CNAME db1.privatelink.database.windows.net is returned. The Azure-provided DNS service checks to see if there is an Azure Private DNS Zone named privatelink.database.windows.net linked to the virtual network and determines there is. The query is resolved to the private IP address of 10.0.2.4 of the Private Endpoint.

Scenario 3 Key Takeaways

- When using the Azure Private DNS Resolver, there are a number of architectural patterns for both the centralized model outlined here and a distributed model. You can reference this post for those details.

- It’s not necessary to link the Azure Private DNS Zone to each spoke virtual network as long as you have configured the DNS Server settings of the virtual network to the IP address of your centralized DNS service which should be running in a virtual network which has virtual network links to all of the Azure Private DNS Zones used for PrivateLink.
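The second takeaway is easy to turn into an audit. The sketch below assumes you have already exported which virtual networks each Private DNS Zone is linked to (from the portal, CLI, or an inventory tool) into a plain dictionary. The virtual network names and the zone list are hypothetical examples, not something the centralized pattern prescribes.

```python
from typing import Dict, Iterable, List, Set


def missing_zone_links(
    required_zones: Iterable[str],
    zone_links: Dict[str, Set[str]],
    dns_vnet: str,
) -> List[str]:
    """Return the zones that are NOT linked to the centralized DNS virtual network.

    zone_links maps a Private DNS Zone name to the set of virtual network
    names it is linked to.
    """
    return sorted(
        zone for zone in required_zones
        if dns_vnet not in zone_links.get(zone, set())
    )


# Hypothetical inventory: one zone is linked only to a spoke by mistake.
links = {
    "privatelink.database.windows.net": {"vnet-shared-dns"},
    "privatelink.blob.core.windows.net": {"vnet-spoke-1"},
}
required = [
    "privatelink.database.windows.net",
    "privatelink.blob.core.windows.net",
    "privatelink.vaultcore.azure.net",
]

print(missing_zone_links(required, links, "vnet-shared-dns"))
```

With this sample data the function reports the blob and Key Vault zones as missing a link to the DNS virtual network, which are the zones whose queries would fall through to public resolution.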

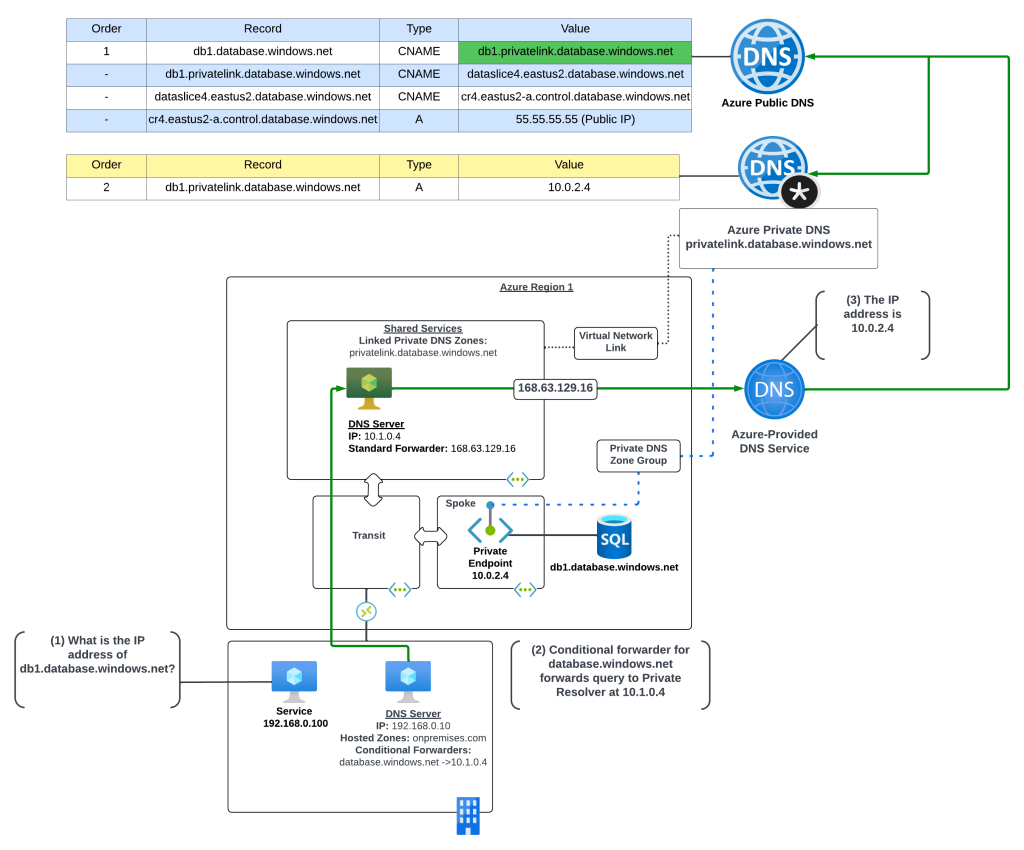

Scenario 4 – On-premises resolution of Azure Private Endpoints

Let’s now take a look at DNS resolution of Azure Private Endpoints from on-premises machines. As I’ve covered in past posts, Azure Private DNS Zones are only resolvable using the Azure-provided DNS service, which is only accessible through the 168.63.129.16 virtual IP, which is not reachable outside the virtual network. To solve this challenge you will need an endpoint within Azure to proxy the DNS queries to the Azure-provided DNS service and connectivity from on-premises into Azure using Azure ExpressRoute or a VPN.

Today you have two options for the DNS proxy which include bringing your own DNS service or using the Azure Private DNS Resolver. I’ll cover both for this scenario.

Scenario 4a – On-premises resolution of Azure Private Endpoints using a customer-managed DNS Service

In this scenario the Azure environment has a traditional hub and spoke where there is a transit network such as a VWAN Hub or a traditional virtual network with some type of network virtual appliance handling transitive routing. The customer-managed DNS service is deployed to a virtual network peered with the transit network. The customer-managed DNS service virtual network has a virtual network link to the Private DNS Zone for privatelink.database.windows.net namespace. An Azure SQL instance named db1.database.windows.net has been deployed with a Private Endpoint in a spoke virtual network.

An on-premises environment is connected to Azure using an ExpressRoute or VPN. The on-premises DNS service has been configured with a conditional forwarder for database.windows.net which points to the customer-managed DNS service running in Azure.

The query resolution path is as follows:

- The on-premises machine creates a DNS query for db1.database.windows.net. After validating it does not have a cached entry it sends the DNS query to the on-premises DNS server which is configured as its DNS server.

- The on-premises DNS server receives the query, validates it does not have a cached entry and that it is not authoritative for the database.windows.net namespace. It determines it has a conditional forwarder for database.windows.net pointing to 10.1.0.4, which is the IP address of the customer-managed DNS service running in Azure. The query is recursively passed on to the customer-managed DNS service via the ExpressRoute or Site-to-Site VPN connection.

- The customer-managed DNS service receives the query, validates it does not have a cached entry and that it is not authoritative for the database.windows.net namespace. It then forwards the query to its standard forwarder, which has been configured to be the 168.63.129.16 virtual IP address for the virtual network, in order to pass the query to the Azure-provided DNS service.

- The Azure-provided DNS service checks to see if there is an Azure Private DNS Zone named database.windows.net linked to the virtual network. Once it validates there is not, the recursive query is resolved against the public DNS namespace. During resolution the CNAME db1.privatelink.database.windows.net is returned. The Azure-provided DNS service checks to see if there is an Azure Private DNS Zone named privatelink.database.windows.net linked to the virtual network and determines there is. The query is resolved to the private IP address of 10.0.2.4 of the Private Endpoint.
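The second step in the path above hinges on which forwarder the on-premises DNS server selects for the query name. A rough way to reason about it is longest-suffix matching against the configured conditional forwarder zones, sketched below in Python. This is a simplified model and not the algorithm of any particular DNS product; the default forwarder address 192.0.2.53 is a placeholder I made up for illustration.

```python
from typing import Dict


def pick_forwarder(
    qname: str,
    conditional_forwarders: Dict[str, str],
    default_forwarder: str,
) -> str:
    """Pick the forwarder for qname using the most specific matching zone."""
    name = qname.rstrip(".").lower()
    best_zone = ""
    best_target = default_forwarder
    for zone, target in conditional_forwarders.items():
        z = zone.rstrip(".").lower()
        matches = name == z or name.endswith("." + z)
        if matches and len(z) > len(best_zone):
            best_zone, best_target = z, target
    return best_target


DEFAULT = "192.0.2.53"  # hypothetical internet-facing forwarder

# Conditional forwarder on the PUBLIC namespace, as used in this scenario.
public_ns = {"database.windows.net": "10.1.0.4"}
print(pick_forwarder("db1.database.windows.net", public_ns, DEFAULT))

# Conditional forwarder on the privatelink namespace only.
privatelink_ns = {"privatelink.database.windows.net": "10.1.0.4"}
print(pick_forwarder("db1.database.windows.net", privatelink_ns, DEFAULT))
```

The first call returns 10.1.0.4, so the query is sent into Azure. The second returns the default forwarder, because the name the client actually asks for (db1.database.windows.net) does not fall under the privatelink zone. In this simplified model, a privatelink-only forwarder never sees the original query.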

Scenario 4b – On-premises resolution of Azure Private Endpoints using Azure Private DNS Resolver

Now let me cover this pattern when using the Azure Private DNS Resolver. I’m going to assume you have some basic knowledge of how the Azure Private DNS Resolver works and I’m going to focus on the centralized model. If you don’t have baseline knowledge of the Azure Private DNS Resolver or you’re interested in the distributed model and the pluses and minuses of it, you can reference this post.

In this scenario the Azure environment has a traditional hub and spoke where there is a transit network such as a VWAN Hub or a traditional virtual network with some type of network virtual appliance handling transitive routing. The Private DNS Resolver is deployed to a virtual network peered with the transit network. The Private DNS Resolver virtual network has a virtual network link to the Private DNS Zone for privatelink.database.windows.net namespace. An Azure SQL instance named db1.database.windows.net has been deployed with a Private Endpoint in a spoke virtual network.

An on-premises environment is connected to Azure using an ExpressRoute or VPN. The on-premises DNS service has been configured with a conditional forwarder for database.windows.net which points to the Private DNS Resolver inbound endpoint.

The query resolution path is as follows:

- The on-premises machine creates a DNS query for db1.database.windows.net. After validating it does not have a cached entry it sends the DNS query to the on-premises DNS server which is configured as its DNS server.

- The on-premises DNS server receives the query, validates it does not have a cached entry and that it is not authoritative for the database.windows.net namespace. It determines it has a conditional forwarder for database.windows.net pointing to 10.1.0.4, which is the IP address of the inbound endpoint for the Azure Private DNS Resolver running in Azure. The query is recursively passed on to the inbound endpoint over the ExpressRoute or Site-to-Site VPN connection.

- The inbound endpoint receives the query and passes it into the virtual network through the outbound endpoint which passes it on to the Azure-provided DNS service through the 168.63.129.16 virtual IP.

- The Azure-provided DNS service checks to see if there is an Azure Private DNS Zone named database.windows.net linked to the virtual network. Once it validates there is not, the recursive query is resolved against the public DNS namespace. During resolution the CNAME db1.privatelink.database.windows.net is returned. The Azure-provided DNS service checks to see if there is an Azure Private DNS Zone named privatelink.database.windows.net linked to the virtual network and determines there is. The query is resolved to the private IP address of 10.0.2.4 of the Private Endpoint.

Scenario 4 Key Takeaways

The key takeaways from this scenario are:

- You must set up a conditional forwarder on the on-premises DNS server for the PUBLIC namespace of the service. While using the privatelink namespace may work with your specific DNS service based on how the vendor has implemented it, Microsoft recommends using the public namespace.

- Understand the risk you’re accepting with this setup. All DNS resolution for the public namespace will now be sent up to the Azure Private DNS Resolver or customer-managed DNS service. If your connectivity to Azure goes down, or those DNS components are unavailable, your on-premises endpoints may start having failures accessing websites that are using Azure services (think images being pulled from an Azure storage account).

- If your on-premises DNS servers use non-RFC1918 address space, you will not be able to use scenario 4b. The Azure Private DNS Resolver inbound endpoint DOES NOT support traffic received from non-RFC1918 address space.
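If you are unsure whether the non-RFC1918 limitation applies to you, a quick check of your on-premises DNS server addresses against the three RFC 1918 ranges will tell you. The sketch below uses only the Python standard library; the sample addresses are placeholders.

```python
import ipaddress

# The three private IPv4 ranges defined by RFC 1918.
RFC1918_NETWORKS = [
    ipaddress.ip_network("10.0.0.0/8"),
    ipaddress.ip_network("172.16.0.0/12"),
    ipaddress.ip_network("192.168.0.0/16"),
]


def is_rfc1918(address: str) -> bool:
    """Return True if address falls inside one of the RFC 1918 ranges."""
    ip = ipaddress.ip_address(address)
    return any(ip in network for network in RFC1918_NETWORKS)


# Placeholder DNS server addresses for illustration.
for server in ["10.1.0.4", "192.168.1.10", "172.32.0.1", "55.55.55.55"]:
    print(server, is_rfc1918(server))
```

I check the explicit ranges rather than relying on `ipaddress.ip_address(...).is_private`, because that property also returns True for other special-purpose ranges such as loopback and link-local.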

Other Gotchas

Throughout these scenarios you have likely observed me using the public namespace when referencing the resources behind a Private Endpoint (example: using db1.database.windows.net versus using db1.privatelink.database.windows.net). The reason is that the certificates for Azure PaaS services do not include the privatelink namespace in the certificate provisioned to the instance of the service. There are exceptions to this, but they are few and far between. You should always use the public namespace when referencing a Private Endpoint unless the documentation specifically tells you not to.

Let me take a moment to demonstrate what occurs when an application tries to access a service behind a Private Endpoint using the PrivateLink namespace. In this scenario there is a virtual machine which has been configured with proper resolution to resolve Private Endpoints to the appropriate Azure Private DNS Zone.

Now I’ll attempt to make an HTTPS connection to the Azure Key Vault instance using the PrivateLink namespace of privatelink.vaultcore.azure.net. In the image below you can see the error returned states the PrivateLink namespace is not included in the subject alternative name field of the certificate presented by the Azure Key Vault instance. What this means is the client can’t verify the identity of the server because the identities presented in the certificate don’t match the identity that was requested. You’ll often see this error as a certificate name mismatch in most browsers or SDKs.
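The mismatch comes down to how TLS clients compare the requested hostname to the names in the certificate. Below is a simplified Python sketch of that comparison, where a wildcard covers exactly one leftmost label. The SAN list and the vault name `myvault` are assumptions I made up for illustration and were not copied from a real certificate. Real TLS libraries apply additional rules.

```python
from typing import Iterable


def hostname_matches(hostname: str, pattern: str) -> bool:
    """Compare a hostname to one certificate name, label by label."""
    host_labels = hostname.lower().rstrip(".").split(".")
    pattern_labels = pattern.lower().rstrip(".").split(".")
    if len(host_labels) != len(pattern_labels):
        # A wildcard never spans more than one label.
        return False
    if pattern_labels[0] == "*":
        return host_labels[1:] == pattern_labels[1:]
    return host_labels == pattern_labels


def matches_any(hostname: str, san_entries: Iterable[str]) -> bool:
    """Return True if any subject alternative name covers the hostname."""
    return any(hostname_matches(hostname, entry) for entry in san_entries)


# Hypothetical SAN entries for illustration only.
sans = ["*.vault.azure.net", "*.vaultcore.azure.net"]

print(matches_any("myvault.vault.azure.net", sans))                 # True
print(matches_any("myvault.privatelink.vaultcore.azure.net", sans))  # False
```

The PrivateLink hostname has one more label than any wildcard entry can cover, so the comparison fails even though the connection reaches the right service.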

Final Thoughts

There are some key takeaways for you with this post:

- Know your DNS resolution path. This is absolutely critical when troubleshooting Private Endpoint DNS resolution.

- Always check your zone links. 99% of the time you’re going to be using the centralized model for DNS described in this post. After you verify your DNS resolution path, validate that you’ve linked the Private DNS Zone to your DNS Server / Azure Private DNS Resolver virtual network.

- Create your on-premises conditional forwarders for the PUBLIC namespaces for Azure PaaS services, not the Private Link namespace.

- Call your services behind Private Endpoints using the public hostname not the Private Link hostname. Using the Private Link hostname will result in certificate mismatches when trying to establish secure sessions.

- Don’t go and link your Private DNS Zone to every single virtual network. You don’t need to do this if you’re using the centralized model. There are very rare instances where the zone must be linked to the virtual network for some deployment check the product group has instituted, but that is rare.

- Centralize your Azure Private DNS Zones in a single subscription and use a single zone for each PrivateLink service across your environments (prod, non-prod, test, etc). If you try to do different zones for different environments you’re going to run into challenges when providing on-premises resolution to those zones because you now have two authorities for the same namespace.
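When troubleshooting along these lines, the first thing I want to know is whether a name resolves to a private or a public address from the machine in question. Here is a small Python helper for that, offered as a sketch. It uses the operating system resolver through `socket.getaddrinfo`, so it follows whatever DNS path that machine is configured with. The resolver is injectable so the logic can be exercised without a network, and the fake resolvers in the example return the addresses used in the scenarios above.

```python
import ipaddress
import socket
from typing import Callable, Dict, List


def resolve_ipv4(hostname: str) -> List[str]:
    """Resolve hostname to IPv4 addresses using the OS-configured resolver."""
    infos = socket.getaddrinfo(
        hostname, None, family=socket.AF_INET, type=socket.SOCK_STREAM
    )
    return sorted({info[4][0] for info in infos})


def classify(
    hostname: str,
    resolver: Callable[[str], List[str]] = resolve_ipv4,
) -> Dict[str, str]:
    """Map each resolved address to 'private' or 'public'."""
    return {
        address: "private" if ipaddress.ip_address(address).is_private else "public"
        for address in resolver(hostname)
    }


# Offline demonstration with fake resolvers standing in for real DNS answers.
print(classify("db1.database.windows.net", resolver=lambda name: ["10.0.2.4"]))
print(classify("db1.database.windows.net", resolver=lambda name: ["55.55.55.55"]))
```

Calling `classify("db1.database.windows.net")` without the `resolver` argument performs a real lookup. A "public" result from a machine that should be using the Private Endpoint usually points back to a missing zone link or a query that never reached the centralized DNS service.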

Before I close out I want to plug a few other blog posts I’ve assembled for Private Endpoints which are helpful in understanding the interesting way they work.

- This post walks through the interesting routes Private Endpoints inject in subnet route tables. This one is important if you have a requirement to inspect traffic headed toward a service behind a Private Endpoint.

- This post covers how Network Security Groups work with Private Endpoints and some of the routing improvements that were recently released to help with inspection requirements around Private Endpoints.

Thanks!

Fantastic post, thanks. I have on-premises Active Directory Domain Controllers that each run AD-Integrated DNS. Does this sound like a possible solution to you?

1. Extend my AD into the cloud by creating Azure VM’s and setting up AD + AD-DNS on them.

2. Configure conditional forwarders for privatelink domains on internal DC’s to point to Azure DC’s.

3. Configure conditional forwarders for privatelink domains on Azure DC’s to point to 168.63.129.16

Hi Nathan. That looks like it should work. The one thing you’ll want to plan around is ensuring that the conditional forwarders aren’t Active Directory integrated, or else they’ll be replicated as part of the directory data. That would inhibit your ability to have a different set of conditional forwarders across DCs in the same domain.

I’m fairly certain you can control that on a per conditional forwarder basis, but it’s been a long time since I’ve played around with it.

I got it working. I created a couple of new DC/DNS servers in Azure. I did not bother with Conditional Forwarders on these, I simply set 1 server-level forwarder and pointed it to 168.63.129.16. Then I configured conditional forwarders on my on-premises DC/DNS servers. I made sure these conditional forwarders were not replicated in my domain, and pointed them to the Azure DC/DNS servers. At first I tried making the conditional forwarders for the privatelink domains, but that didn’t work as expected. So, I changed them to the public domains (azurewebsites.net, database.windows.net, etc.) and that did the trick.

Great to hear!

Hi, Great article here. I am in the scenario 4 situation, with an on-premise infoblox grid. Do you have any specifics on the DNS forwarder. I see some example templates running a linux machine, but no specifics on how to configure it afterward. https://azure.microsoft.com/en-us/resources/templates/301-dns-forwarder/

I’m wondering if it makes sense to make a DNS forwarder with windows instead, as well.

Hi there. My typical advice to customers is to use whatever DNS platform they’re comfortable with. If it’s Linux, throw together a BIND server. If it’s Microsoft, use a Microsoft server running the DNS service (or cheat and leverage an existing domain controller).

In your case where you have an existing InfoBlox grid, you may want to look at a tactical and strategic solution. Tactically, it sounds like Microsoft DNS is what you’re most comfortable with, so that might be good for the short term. Longer term you should begin planning on how you’re going to expand InfoBlox into Azure. Expand the existing grid or create a new one?

With Infoblox you have the Cadillac of DNS solutions, so no sense in driving the Corolla (Microsoft DNS / Bind DNS) for any longer than you need to.

Hope that helps!

Thanks for the write-up! I’m picking up my hair and putting it back on my head. One small correction: 168.61.129.16 should be 168.63.129.16

Thanks Matt! I’ll fix that typo.

Can I not just create a zone directly on the DNS / DC named privatelink.database.windows.net and, inside it, create the record db1.privatelink.database.windows.net? I tested it in my environment and it worked.

Note that the DNS / DC has a forwarder configured for Google’s DNS at 8.8.8.8.

Hi Felipe. That will work with Windows DNS. This is due to an odd behavior where it will take the results of a recursive query returned by an upstream forwarder, iterate through the returned records looking for CNAMEs of namespaces it is authoritative for, and return the record from its local forward lookup zone. This behavior is specific to Windows DNS and does not work on all third-party DNS products. That is why I did not list it.

Is it possible to create a conditional forwarder with the name of the storage account (storageteste.blob.windows.net) pointing to 168.63.129.16, given that the Windows DNS VM is inside a VNet linked to the Azure Private DNS zone (privatelink.blob.windows.net)?

Yes, that is possible and I have tested it. You could also create a forward lookup zone with the FQDN of the storage account and create a single A record within the forward lookup zone with a blank name (to reference the parent) and the IP of the private endpoint. While both of these solutions work, there are a ton of negatives.

1. This will not scale for large deployments.

2. Creation/modification/deletion of a forward lookup zone or conditional forwarder is a server-level change and thus presents more risk if a mistake is made.

3. This works in Windows DNS but I’m not sure this will work in 3rd party DNS products.

4. Specific to Active Directory-integrated DNS, in large deployments that are spinning up and spinning down resources, at a large scale this could begin bloating the Active Directory DIT to the point there are performance impacts within AD.

I would not recommend this pattern in a production environment.

I tested in my environment creating a zone called storageteste.blob.windows.net with a blank type A record pointing to the private IP of the private endpoint and it worked.

I also tested creating a zone called storageteste.privatelink.blob.windows.net with a blank type A record pointing to the private IP of the private endpoint and it worked.

Windows DNS has a Google DNS forwarder only (8.8.8.8).

What would be the difference between the 2 modes?

There is no real difference beyond the second scenario is specific to DNS products that exhibit the behavior I described above. If you opted to move away from Windows DNS to a third-party DNS product, you might not experience the same result.

So, as I understand it, I could use scenario 1 for both Windows and Linux, that is, creating a zone with the name of my storage account storageteste.blob.windows.net and pointing to the Private IP of Private Link.

With this method, you would be skipping the following DNS hops:

CNAME storageteste.privatelink.file.core.windows.net

CNAME file.cq2prdstr04a.store.core.windows.net

No problem then, skipping these jumps?

I can’t say whether the first method would work for DNS products like BIND; you would need to test to confirm. In theory, method one should work.

On the second question, you are theoretically already skipping those records in the normal pattern of using Azure Private DNS since the query is intercepted at the second CNAME and forwarded to the private DNS zone. You would want to contact Microsoft support to get an authoritative answer.

This doesn’t work across multiple subscriptions. Otherwise, great article.

See: https://github.com/MicrosoftDocs/azure-docs/issues/53153

Hi Tom. I read through your post on the GitHub issue, but can you describe it further? If I understand correctly, you are saying you are unable to create a Private Endpoint in SubscriptionA and have it register its A record in a Private DNS Zone in SubscriptionB. Is this an accurate description?

If this is your use case, this is actually possible. In the portal when you toggle the option to integrate with Private DNS, you’ll see a drop down menu for subscription. You would then select the appropriate zone from the subscription. To ensure this feature wasn’t broken, I tested it a few minutes ago using both the Portal experience and an ARM template.

Below is an ARM template I wrote that you can try that demonstrates this same concept.

https://github.com/mattfeltonma/arm-templates/tree/master/examples/private-endpoint-existing-private-dns

Ahhh, this makes a lot more sense. So the way to do this is a) pre-create the matching required private DNS zone(s) matching the azure private link domains used for a particular service and b) create a private endpoint as a standalone resource to enable linking it back to those central existing zones. Thanks Matt! Note that when you are creating a brand new private link enabled service such as ACR, there is no option to find an existing zone in another subscription. That’s really the problem I was trying to raise here. Looking at the ARM API docs, it looks like that `privateEndpointResourceId` is the key field. The portal does not expose this during the “create resource wizard”.

None of the documentation explains this use case so it’s been a frustrating process to try and find out how to do this. Your blog post definitely helps illustrate the integration scenarios well – I just couldn’t figure out how to get the damn DNS record into the right zone in a predictable way!

Thanks!

Awesome! Glad it helped and cleared it up.

I agree there is a lot of room for improvement in the public documentation for Private DNS and Private Endpoints. I try to look at the bright side in that it gives me something to write about. 🙂

Disclaimer: I am not a networking expert.

I think you have addressed this already here – “The first reason is it allows clients accessing to instance through a public IP to continue to do so because Microsoft has established a split-brain DNS configuration for the privatelink.database.windows.net zone.”

But wanted to understand it in simpler terms.

I am trying to set up SQL Azure with Private Link, so that my new on-prem applications can access the database via the already established Express Route.

But there are other existing on-prem applications using SQL Azure without Private Link.

Am I right in assuming that configuring the DNS as per Scenario #2 (or other scenarios) may cause the applications using public FQDN to connect with SQL Azure to fail?


Hi Fred. Your scenario may be challenging to make work. If you want your on-premises workloads to be able to resolve Private Link IP addresses, you typically have to configure your underlying DNS infrastructure to support that resolution path. Typically this is done by configuring on-premises DNS servers to forward queries for the public zone, such as blob.core.windows.net, to a DNS resolver in Azure. The challenge is it’s typically a binary decision that affects all machines on-premises.

To make this work you’d need to have a different resolution path for this subset of machines that need to resolve to the Private Link IP address. This could be via HOSTS files configured directly on the machines (for testing purposes) or a whole separate DNS infrastructure dedicated to this type of resolution. My recommendation would be to make an organizational decision on how you’re going to handle PaaS services in the future. Personal opinion, you shouldn’t mix the two.
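To make the "binary decision" point concrete, here is a toy Python model of the two options. It does not perform real DNS queries. The FQDN, IP addresses, and client names are hypothetical, and the forwarder is reduced to a simple suffix match.

```python
# Toy model only: the FQDN, IP addresses, and client names are hypothetical.
PUBLIC_IP = "203.0.113.10"   # what public DNS would return
PRIVATE_IP = "10.0.0.2"      # what a resolver in Azure would return for the Private Endpoint
FQDN = "db1.database.windows.net"


def shared_dns_lookup(fqdn: str, forwarded_zones: set[str]) -> str:
    """Answer given by the shared on-premises DNS servers, the same for every client."""
    forwarded = any(fqdn == z or fqdn.endswith("." + z) for z in forwarded_zones)
    return PRIVATE_IP if forwarded else PUBLIC_IP


def client_lookup(client: str, fqdn: str, forwarded_zones: set[str],
                  hosts_files: dict[str, dict[str, str]]) -> str:
    """A per-machine HOSTS entry, if present, wins over the shared DNS servers."""
    override = hosts_files.get(client, {}).get(fqdn)
    return override if override else shared_dns_lookup(fqdn, forwarded_zones)


clients = ["new-app", "legacy-app"]

# Option 1: conditional forwarder on the shared DNS servers. Every client goes private.
with_forwarder = {c: client_lookup(c, FQDN, {"database.windows.net"}, {}) for c in clients}

# Option 2: no forwarder, HOSTS override on the new app only. Only that machine goes private.
hosts = {"new-app": {FQDN: PRIVATE_IP}}
with_hosts = {c: client_lookup(c, FQDN, set(), hosts) for c in clients}

print(with_forwarder)
print(with_hosts)
```

In the first case both applications receive the private address, whether or not the legacy one has a network path to it. In the second case only the machine with the override changes behavior, which is why it works for testing but is painful to operate at scale.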


This is really a very helpful blog post. I am not a networking expert and have one query around private DNS zones.

Let’s say an organisation has its own DNS infrastructure and creates A records for db1.database.windows.net that resolve to 10.0.0.2 (the private endpoint for SQL). In this case, we don’t actually need a private DNS zone in Azure for privatelink.database.windows.net. Correct?


Hi Gary. It sounds like you’re saying an organization has decided to create a split brain DNS scenario. In this scenario a DNS namespace is resolvable publicly, but the organization has decided to also create a non-public DNS namespace which they are hosting authoritatively within their own infrastructure.

Technically, the scenario you are describing would work. If an organization decided to host a private database.windows.net DNS zone, or a DNS zone named db1.database.windows.net, queries would likely resolve to the values in the A records they create (this could vary across DNS platforms). There are a few downsides to this. Creating a split-brain DNS scenario for a zone you don’t own could introduce weird issues where that zone is used for other third-party services (this is especially true with PaaS zones). If you decided to create a zone to reflect a single record (like a zone for db1.database.windows.net), the downside is that it’s somewhat of an anti-pattern, which could be problematic when troubleshooting and would also add the operational overhead of maintaining those records at scale.


Hi Matt,

Would a reverse proxy / app gateway performing ssl termination work with scenario 6?

Thanks,

Jon


Hi Jon.

Funny you mention that because a peer and I were just talking about that pattern a few weeks ago. I haven’t tested it myself, but we were fairly certain it would work to get around the custom domain limitation at least for HTTP/HTTPS endpoints. Nothing would be stopping you from tossing in a 3rd party reverse proxy like an F5 LTM to handle the non-HTTP/HTTPS traffic.

That topic actually led into another topic during our discussion which pops up fairly often with Private Endpoints, and that’s multi-region / DR scenarios. Microsoft doesn’t give great guidance on how to handle DR/HA scenarios with Private Endpoints, largely because it’s a gap in Azure’s DNS offerings. Azure DNS is super simplistic from a DNS service perspective and doesn’t offer any type of probing. Traffic Manager and Front Door are public endpoint only, which creates a gaping hole for internally-facing applications.

I’m rambling a bit, but the gist of it is that if you’re going to solve the problem for custom domains, you may as well bring in a product that can also handle GSLB and solve both problems at once. For example, using an F5 LTM/GTM combo.

Either way, you are right on track with potential workarounds for custom domains. Maybe I’ll make it a future blog post. 🙂


Helpful post thank you. My team and I were wondering about DR etc if we private endpoint all the things – in the event of vnet failure we lock ourselves out. I reached out to Microsoft and they recommended the vnet and private endpoint be deployed somewhere else as well (perhaps the B region) with vnet peering so there was another route in, advice that seems reasonable enough to take.

I was also wondering if you had any insight on best practice for private DNS zones. If we looked at privatelink.database.windows.net and we had Dev/Prod/Live subscriptions with separate vnets and Azure SQL servers in all three, would you create one main privatelink.database.windows.net zone that contained all records and was linked to multiple vnets or would you think a single zone per vnet would be more appropriate?

Many thanks for any insight you might have!

Dan


Hi Dan,

I’m a fan of maintaining a single authoritative zone for each Private Link namespace. The zone is then linked to the VNet containing your DNS proxy device. The zone itself is treated as production, so automation is important to ensure lower environments aren’t inhibited by a more tightly controlled resource.

The challenge with doing separate zones has to do with on-premises resolution requirements. If you have resources on-premises or in another cloud that have to resolve those private DNS zones, you need one authority to point those queries to. If you have separate zones for each environment, you’ll be in a bind.

If you don’t have requirements for resources outside of Azure to resolve records in those zones, you could create separate namespaces for dev/way/prod/etc I suppose. My concern would be unwinding that configuration if new requirements arrive in the future.



Thanks for replying.

Just to quickly clarify on my first thing the peering isn’t necessary apparently (https://docs.microsoft.com/en-us/azure/dns/dns-faq-private#will-azure-private-dns-zones-work-across-azure-regions-)

Regarding the single zone, I like that too. It seems much easier to manage, and I don’t see any harm in our internal envs being able to know about IPs in our Live env if all they can do is query them.

We’re Azure cloud native so no on-prem or alternate providers to worry about.

Thanks again for your insight.

Dan



Hi Matt,

Thank you for the great articles you have!

I have read them but still have an issue.

I have an Azure VNet 10.6.0.0/16 with several server VMs accessing Azure SQL using the dbname.database.windows.net DNS name. The servers are part of the domain and we use AADDS with two DNS servers, 10.0.0.4 and 10.0.0.5.

I have created Azure SQL Private endpoint with the IP 10.6.0.8 and FQDN dbname.privatelink.database.windows.net.

Private DNS zone “privatelink.database.windows.net” created and has A record for my “dbname” and its 10.6.0.8 IP.

When I run SQL connectivity test provided by Microsoft from the VM server – it works but says: “This server has a private link alias but DNS is resolving to a regular Gateway IP address, running public endpoint tests.”

When I do “nslookup dbname.database.windows.net” it returns me:

>nslookup dbname.database.windows.net

Server: lrmapyg3q9-3j53.internal.cloudapp.net

Address: 10.0.0.4

Non-authoritative answer:

Name: cr5.eastus1-a.control.database.windows.net

Address: 40.78.225.32

Aliases: dbname.database.windows.net

dbname.privatelink.database.windows.net

dataslice6.eastus.database.windows.net

dataslice6eastus.trafficmanager.net

Why is the FQDN not resolved to the private link IP? Should something be done on the AADDS DNS servers?

Thanks a lot for your help!

Best regards,

Kirill


Hi Kirill,

It sounds like the Private DNS Zone may not have been linked to the virtual network the DNS servers are in. I haven’t touched AADDS in about 4 years, but I believe it gets delegated a subnet in a VNet. You need to ensure the Private DNS Zone is linked to that VNet.


Hi Matt,

Thank you for your response!

I had my private DNS zone linked to the VNET which was different from AADDS VNET.

Once I added another link to AADDS VNET – everything started working as expected!!!

You are the best!

Much appreciated!


Awesome! Glad you got it working.


Thank you for the blog entry on DNS, it has been very helpful for helping me understand and troubleshoot.
